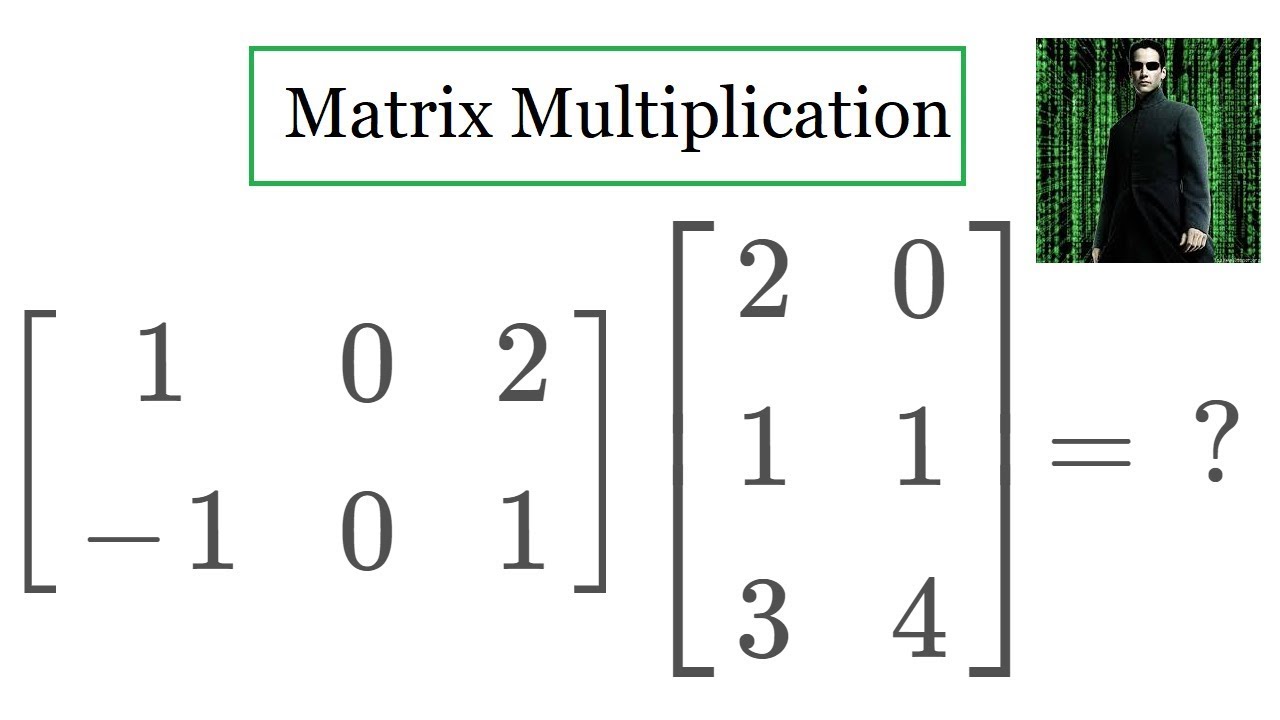

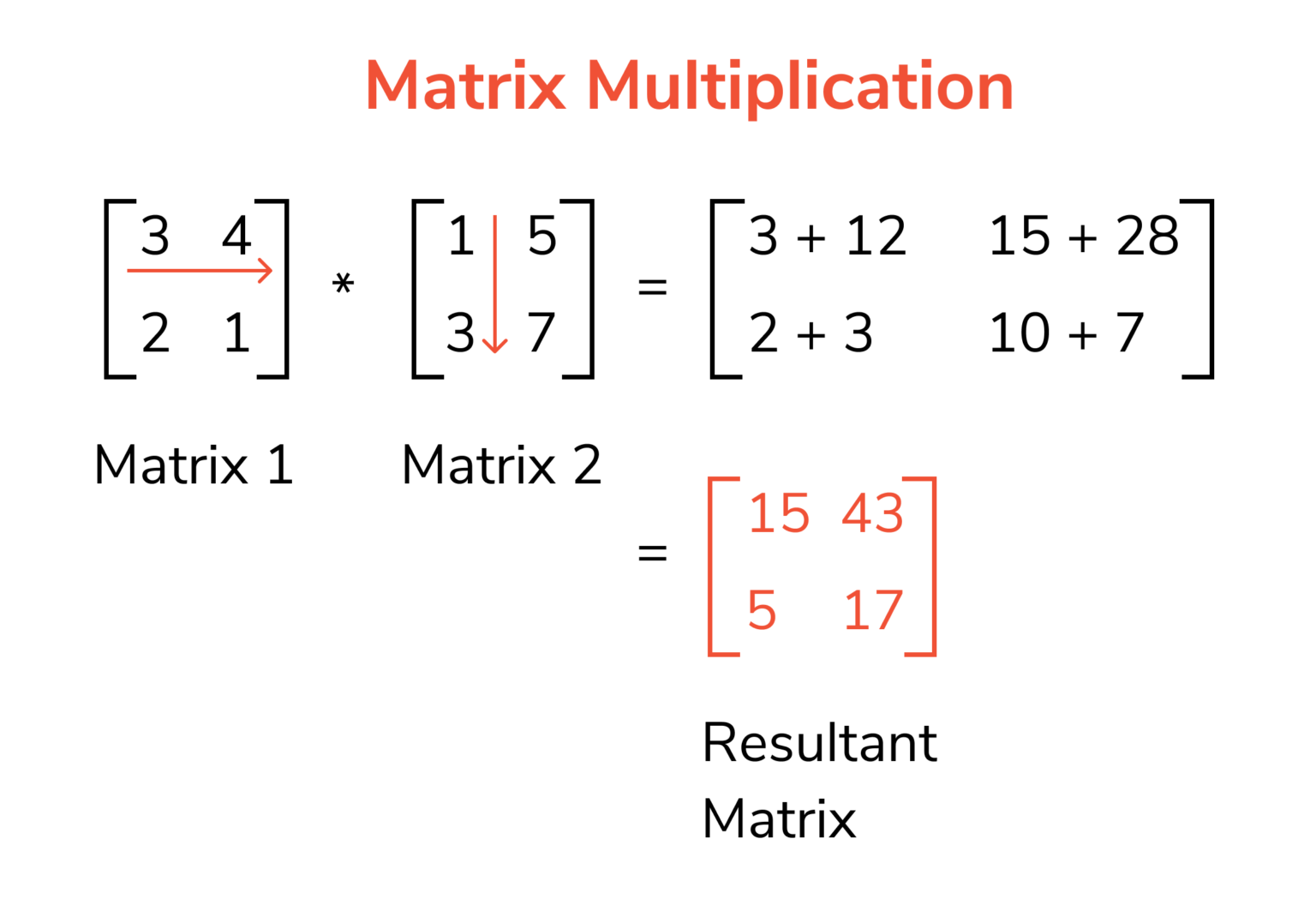

In this note we will be working with matrices and vectors. Simply put, matrices are two-dimensional arrays and vectors are one-dimensional arrays (or the "usual" notion of arrays). We will be using notation that is consistent with array notation.

For this note we will assume that the numbers are small enough that all basic operations (addition, multiplication, subtraction and division) take constant time. For this section pretty much any reasonable set of numbers (e.g. integers, real numbers, complex numbers) works. If you must ask, a set of numbers for which this law holds (plus some other requirements) is called a semi-ring.

Let us define the multiplication between a matrix A and a vector x in which the number of columns in A equals the number of rows in x. So, if A is an m × n matrix, then x must have n entries and the product Ax is a vector with m entries, one per row of A. Each entry is the product of a row of A with x: to multiply a row vector by a column vector, the row vector must have as many columns as the column vector has rows, and the result is the sum of the products of corresponding entries. We will soon see that this sum of products corresponds to a very natural problem. If the problem seems too esoteric, just hold on to your (judgmental) horses.

When A is an n × n matrix, computing Ax takes n such sums of products, each of which can be computed in $O(n)$ time (and thus, $O(n^2)$ time overall).

I need to do a matrix/vector multiplication in Matlab with very large sizes: 'A' is a 655360 by 5 real-valued matrix that is not necessarily sparse and 'B' is a 655360 by 1 real-valued vector. My question is how to compute `B'*A` (a 1 by 5 row vector) efficiently. I have noticed a slight time improvement by computing `A'*B` instead, which gives a column vector.
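The row-by-row view of matrix-vector multiplication can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the function names `inner_product` and `mat_vec` are made up for this example, and a matrix is assumed to be stored as a list of rows.

```python
def inner_product(x, y):
    """Sum of products of corresponding entries: O(n) for length-n vectors."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same length")
    total = 0
    for a, b in zip(x, y):
        total += a * b
    return total


def mat_vec(A, x):
    """Multiply an m x n matrix A (a list of m rows) by a length-n vector x.

    One inner product per row, so m * n multiplications in total,
    which is O(n^2) when A is n x n.
    """
    return [inner_product(row, x) for row in A]


A = [[1, 2, 3],
     [4, 5, 6]]
x = [1, 0, -1]
print(mat_vec(A, x))  # [-2, -2]
```

The length check in `inner_product` enforces the requirement that the number of columns in A equals the number of rows in x.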

I am being purposefully vague about what exactly I mean by numbers.

The definition of matrix-vector multiplication is easily extended to matrix-matrix multiplication. The matrix product AB = C just means that A is multiplied with each column vector of B and that the resulting column vectors form the columns of the product matrix C.

The video is actually a 2D-DFT and not exactly the DFT as defined above.
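The column-by-column description of the matrix product can be sketched as follows. This is a minimal illustration, assuming matrices are stored as lists of rows; the names `mat_vec` and `mat_mul` are hypothetical helpers for this example only.

```python
def mat_vec(A, x):
    """Multiply matrix A (list of rows) by vector x, one inner product per row."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]


def mat_mul(A, B):
    """Compute C = AB by multiplying A with each column of B."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    # Extract the columns of B.
    cols_B = [list(col) for col in zip(*B)]
    # Each column of C is A times the corresponding column of B.
    cols_C = [mat_vec(A, col) for col in cols_B]
    # Turn the list of columns back into a list of rows.
    return [list(row) for row in zip(*cols_C)]


A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]
print(mat_mul(A, B))  # [[2, 1], [4, 3]]
```

Here B swaps the two coordinates, so multiplying by it swaps the columns of A.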

Since i is used liberally as an index in this note. The Khan Academy video above calls the inner product just the dot product and uses the notation x.y instead of the notation that we will use in this note.

`Error, (in rtable/Product) use *~ for elementwise multiplication of Vectors or Matrices; use . (dot) for Vector/Matrix multiplication`

An expression involving the multiplication of Vectors and/or Matrices (and possibly Arrays) has been constructed using the standard multiplication operator, `*`, which is ambiguous. This error results if Matrices, or a Matrix and a Vector, are multiplied using the commutative multiplication operator, `*`.

To multiply Matrices and/or Vectors together using the standard Linear Algebra multiplication operation, use the non-commutative multiplication operator, `.` (dot). If instead you want to perform elementwise multiplication of Vectors and/or Matrices and/or Arrays, use `*~`, that is, the standard multiplication operator, `*`, followed by the "elementwise" operator, `~`. Note that when multiplying Arrays together (not with Vectors or Matrices), the standard multiplication operator will result in the elementwise product, so the `~` is not necessary.

Implicit multiplication (using a space to mean multiplication) can also be ambiguous. It is interpreted based on the operands, and when it can, Maple parses it as follows: for Vector/Matrix operands it is interpreted as the `.` (dot) non-commutative multiplication operator, while for Array operands it is interpreted as the elementwise operator. However, best practice is to insert the explicit multiplication operator into your expressions.

Note that in 2-D math `*` displays as a center dot, `⋅`, and typing a dot (using the period key) displays as `.`. This display can be modified through the interactive Typesetting Rule Assistant.
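The difference between the two kinds of product is not specific to Maple. As a language-neutral illustration, here is a small plain-Python sketch (not Maple syntax; the function names `elementwise_product` and `matrix_product` are hypothetical) showing that the two operations give different results on the same inputs, and that the matrix product depends on the order of its operands.

```python
def elementwise_product(A, B):
    """Multiply matching entries; A and B must have the same shape."""
    if len(A) != len(B) or any(len(ra) != len(rb) for ra, rb in zip(A, B)):
        raise ValueError("shapes must match for elementwise multiplication")
    return [[a * b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def matrix_product(A, B):
    """Linear-algebra product: rows of A times columns of B."""
    if any(len(row) != len(B) for row in A):
        raise ValueError("columns of A must match rows of B")
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


A = [[1, 2],
     [3, 4]]
B = [[5, 6],
     [7, 8]]

print(elementwise_product(A, B))  # [[5, 12], [21, 32]]
print(matrix_product(A, B))       # [[19, 22], [43, 50]]
print(matrix_product(B, A))       # [[23, 34], [31, 46]]
```

The elementwise product is the same in either order, while the last two lines differ, which is why a commutative operator is a poor fit for the matrix product.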
